Every time I tap a target on the Seestar app, my phone tells the telescope what to find, but not where it is. The S50 figures that part out itself: it slews in the rough direction, takes a calibration frame, asks “where on the sky was this taken?”, and adjusts. Three seconds, one re-pointing nudge, target centered.

Plate solving is the answer to that one question: given an arbitrary image of stars, recover the pointing, scale, and rotation. The dominant open-source approach, astrometry.net, was published by Lang, Hogg, and collaborators in 2010 and has quietly become the backbone of consumer astrophotography. Every smart telescope on the market today, from the Seestar S50 to the Vespera Pro, the DWARF 3, and the Celestron Origin, runs some variant of plate solving in real time.

This post walks through the algorithm from the bottom up, because the smart-telescope category genuinely doesn’t make sense without it. If you’ve ever wondered why a $500 alt-az mount with a mediocre stepper and zero polar alignment can reliably find M81 on the first try, the answer is plate solving, not better hardware.

The blind problem

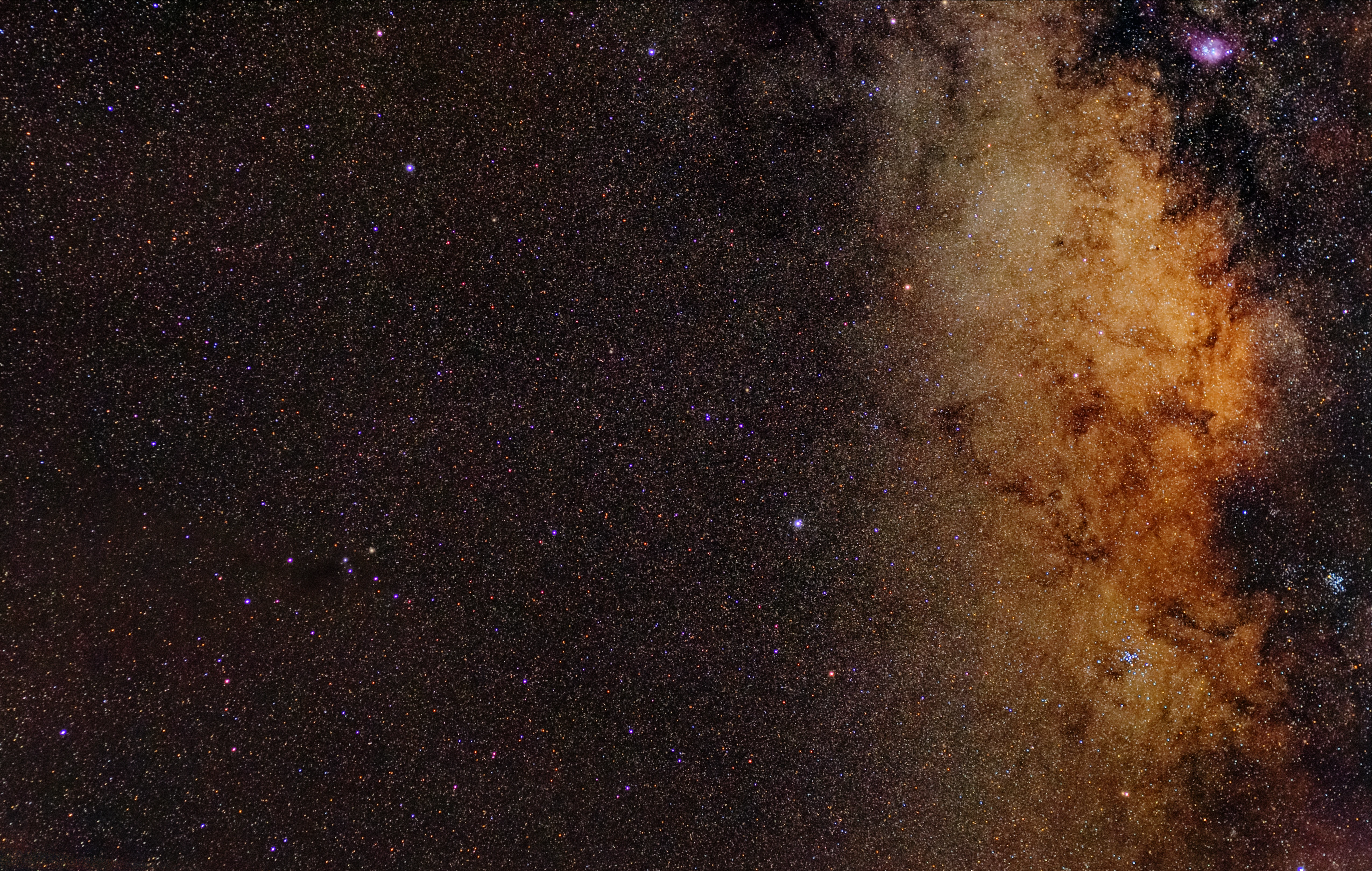

Forget the telescope for a second. Imagine I hand you a square photo of a few dozen stars and ask: where is this in the sky?

If you don’t know the camera’s pointing, scale, or orientation, you’re solving blind astrometric calibration, and it’s harder than it sounds. A typical wide-field image catches 50–200 stars. The reference catalogs (Tycho-2, UCAC, Gaia DR3) range from a few million to nearly two billion stars. You can’t match every detected star against every catalog star: the combinations blow up, and the brute-force search space is roughly the celestial sphere times all rotations times all plausible scales. Worse, you don’t know which detected sources are stars at all. Cosmic rays, hot pixels, satellites, and faint background galaxies all show up as point sources to a star detector.
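To put rough numbers on the blow-up, here is a short count of four-star subsets using Python's `math.comb`. It is illustration only: the 2.5 million figure stands in for a catalog roughly the size of Tycho-2, and no real solver enumerates catalog quads this naively.

```python
from math import comb

def quads_from(n_stars: int) -> int:
    """Number of distinct 4-star subsets ("quads") among n_stars stars."""
    return comb(n_stars, 4)

# Typical image star counts, then roughly Tycho-2's ~2.5 million stars.
for n in (20, 50, 200, 2_500_000):
    print(f"{n:>9,} stars -> {quads_from(n):.2e} possible quads")
```

Even 200 detections yield about 65 million quads, one reason the pipeline below builds quads only from the brightest few dozen stars.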

Pre-2010, “GoTo” telescopes ducked the problem. They asked you to polar-align the mount, slew to a known bright star, and confirm the offset. Repeat for two or three stars and the mount’s controller could derive the pointing model. The whole ritual took 10–20 minutes and broke the moment a tripod leg shifted. It’s why generations of beginners gave up on astrophotography after their first few sessions.

Plate solving rewrites the contract. The mount’s mechanical accuracy stops mattering. You take a picture; the picture tells you where it was taken. Then you correct.

The astrometry.net trick: quads

The astrometry.net insight is to abandon star-by-star matching entirely and match patterns of stars instead. Specifically, asterisms of four stars (what the paper calls “quads”).

For any four stars, you can build a hash code that’s invariant under translation, rotation, and scaling. Those three transformations account for almost everything you don’t know about the image. Here’s the construction:

- Take the four stars. Find the two that are farthest apart. Call them A and B.

- Use AB as your local axis. Place A at (0, 0) and B at (1, 1) in a rotated, rescaled frame. This fixes the scale and rotation.

- The remaining two stars, C and D, now have specific (x, y) coordinates in this AB frame.

- The 4-vector (x_C, y_C, x_D, y_D) is your hash.

That’s it. Two points inside the circle that has AB as its diameter: a single coordinate in 4-D space. Translate, rotate, or scale the original image and the hash doesn’t change. What gets indexed is the pattern of relative positions.
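A minimal sketch of that construction, assuming flat (x, y) pixel coordinates and using Python complex numbers so that "rotate, scale, translate" becomes one multiply and one subtraction. It deliberately skips the symmetry-breaking conventions, so the output depends on the order the stars are passed in; the demo keeps the order fixed and only checks that moving, rotating, and rescaling the quad leaves the code unchanged. The `similarity` helper and the star positions are made up for illustration.

```python
import cmath
import math
from itertools import combinations

def quad_hash(stars):
    """4-vector code for four (x, y) stars.

    A, B = the most widely separated pair; a similarity transform sends A to
    (0, 0) and B to (1, 1); C's and D's coordinates in that frame are the code.
    No symmetry-breaking yet, so the result depends on the input order.
    """
    pts = [complex(x, y) for x, y in stars]
    a, b = max(combinations(range(4), 2),
               key=lambda pair: abs(pts[pair[0]] - pts[pair[1]]))
    c, d = [i for i in range(4) if i not in (a, b)]
    # Multiplying by a complex number rotates and scales; subtracting A translates.
    to_frame = (1 + 1j) / (pts[b] - pts[a])
    zc = (pts[c] - pts[a]) * to_frame
    zd = (pts[d] - pts[a]) * to_frame
    return (zc.real, zc.imag, zd.real, zd.imag)

def similarity(stars, angle_deg, scale, dx, dy):
    """Rotate, scale, and shift a list of (x, y) points (hypothetical helper)."""
    m = cmath.rect(scale, math.radians(angle_deg))
    moved = [complex(x, y) * m + complex(dx, dy) for x, y in stars]
    return [(z.real, z.imag) for z in moved]

quad = [(10.0, 12.0), (80.0, 95.0), (40.0, 30.0), (55.0, 70.0)]
h1 = quad_hash(quad)
h2 = quad_hash(similarity(quad, angle_deg=37.0, scale=2.5, dx=-300.0, dy=42.0))
print(all(math.isclose(u, v, abs_tol=1e-9) for u, v in zip(h1, h2)))  # True
```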

A couple of ordering conventions break the remaining symmetries (which end of the long axis is A, which of the other two stars is C), so two cameras pointed at the same patch of sky produce hashes that land close together even if one is rotated 90° relative to the other. Mirror-image parity isn’t absorbed by the code itself; the solver handles it by trying both handednesses. The actual paper has the details and the Bayesian acceptance test; the conceptual point is that an asterism of four stars can be encoded into a tiny four-number fingerprint.
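One way to implement those ordering conventions, as a sketch. The two rules used here, x_C + x_D ≤ 1 to decide which end of the long axis is A and x_C ≤ x_D to decide which star is C, are my reading of the paper's conventions, so treat them as an assumption. The useful fact is that relabelling A and B maps every frame point z to (1 + 1i) − z, so one comparison picks a unique labelling. Mirror flips are not handled.

```python
import math
from itertools import combinations, permutations

def canonical_quad_hash(stars):
    """Quad code with the A/B and C/D labelling fixed by ordering rules.

    Assumed rules: x_C + x_D <= 1 picks A; x_C <= x_D picks C.
    Mirror-image (parity) flips are not handled here.
    """
    pts = [complex(x, y) for x, y in stars]
    a, b = max(combinations(range(4), 2),
               key=lambda pair: abs(pts[pair[0]] - pts[pair[1]]))
    c, d = [i for i in range(4) if i not in (a, b)]
    to_frame = (1 + 1j) / (pts[b] - pts[a])
    zc = (pts[c] - pts[a]) * to_frame
    zd = (pts[d] - pts[a]) * to_frame
    # Swapping A and B sends every frame point z to (1 + 1j) - z.
    if zc.real + zd.real > 1:
        zc, zd = (1 + 1j) - zc, (1 + 1j) - zd
    # Swapping C and D just exchanges the two points.
    if zc.real > zd.real:
        zc, zd = zd, zc
    return (zc.real, zc.imag, zd.real, zd.imag)

# Every one of the 24 input orderings should now give the same code.
quad = [(10.0, 12.0), (80.0, 95.0), (40.0, 30.0), (55.0, 70.0)]
codes = [canonical_quad_hash(list(order)) for order in permutations(quad)]
ref = codes[0]
print(all(math.isclose(u, v, abs_tol=1e-9) for code in codes for u, v in zip(code, ref)))  # True
```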

Pre-indexing the entire sky

The cleverness compounds when you realise this work can be done once for the entire sky, ahead of time. The astrometry.net team computed quad hashes for billions of asterisms across multiple survey catalogs, organised them by approximate angular scale, and shipped index files that any client can download.
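A toy version of "hash once, look up later", as a sketch under loud assumptions: the catalog is eight made-up stars on a small flat patch (real index files are built from survey catalogs on the sphere), one scale band stands in for "organised by approximate angular scale", and the lookup is a linear scan where the real index files use kd-trees. The demo rotates, scales, and shifts one indexed quad to fake an image, then finds it again.

```python
import cmath
import math
from itertools import combinations

def canonical_quad(pts, ids):
    """Return (code, (a, b, c, d), |AB|) for four star ids into pts (complex)."""
    a, b = max(combinations(ids, 2), key=lambda p: abs(pts[p[0]] - pts[p[1]]))
    c, d = [n for n in ids if n not in (a, b)]
    to_frame = (1 + 1j) / (pts[b] - pts[a])
    zc, zd = (pts[c] - pts[a]) * to_frame, (pts[d] - pts[a]) * to_frame
    if zc.real + zd.real > 1:  # relabel A <-> B
        a, b, zc, zd = b, a, (1 + 1j) - zc, (1 + 1j) - zd
    if zc.real > zd.real:      # relabel C <-> D
        c, d, zc, zd = d, c, zd, zc
    return (zc.real, zc.imag, zd.real, zd.imag), (a, b, c, d), abs(pts[b] - pts[a])

def build_index(catalog, min_ab, max_ab):
    """Hash every catalog quad whose A-B length falls in one scale band."""
    pts = [complex(x, y) for x, y in catalog]
    index = []
    for ids in combinations(range(len(pts)), 4):
        code, labels, ab = canonical_quad(pts, ids)
        if min_ab <= ab <= max_ab:
            index.append((code, labels))
    return index

def lookup(index, code, tol):
    """Linear scan for stored codes within tol of the query code."""
    return [labels for stored, labels in index if math.dist(stored, code) <= tol]

# Made-up "catalog" of star positions on a small, flat patch of sky.
catalog = [(5, 5), (90, 10), (50, 50), (20, 80), (70, 85), (35, 20), (60, 30), (85, 60)]
index = build_index(catalog, min_ab=30.0, max_ab=90.0)

# Fake "image": one indexed quad, rotated 120 degrees, scaled 3x, and shifted.
code0, labels0 = index[0]
turn = cmath.rect(3.0, math.radians(120))
image = [complex(*catalog[n]) * turn + (400 + 250j) for n in labels0]
image_code, _, _ = canonical_quad(image, range(4))
print(labels0 in lookup(index, image_code, tol=1e-6))  # True
```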

When your Seestar takes a calibration frame, here’s what happens (roughly; I haven’t decompiled the firmware, but the open-source pipeline is well-documented and ZWO has acknowledged building on astrometry.net and Siril among other open-source components):

- Source extraction. A star detector, usually a Source Extractor-style algorithm, finds bright point sources in the image and rejects obvious junk (cosmic rays, edge artefacts, saturated pixels).

- Quad generation. The algorithm picks asterisms (typically built from the brightest 20–40 detected stars) and computes hashes for each.

- Index lookup. Each hash queries the pre-built index for stored hashes within a small tolerance. Because the hash ignores rotation, scale, and translation, a match can come from any camera orientation; and because a match pairs four detected stars with four catalog stars, it implicitly proposes a full coordinate transform from image pixels to celestial coordinates.

- Hypothesis test. The proposed transform predicts where every other detected star should lie in the catalog. If a large fraction fall within tolerance, the match is real. If not, the hypothesis dies and the next quad is tried.

- Refinement. With the transform locked in, the algorithm fits a more accurate solution, often with polynomial distortion terms (SIP, TPV) for wide-field optics.
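The "proposes a transform" and "hypothesis test" steps can be sketched in a few lines, assuming flat tangent-plane coordinates and a pure similarity transform (no SIP or TPV terms). The real solver works on the sphere and scores hypotheses with its Bayesian test rather than a raw match fraction; the catalog, the true transform, and the two junk detections below are all invented for the demo.

```python
def similarity_from_pair(img_a, img_b, cat_a, cat_b):
    """Transform z -> s*z + t sending image stars A, B onto catalog stars A, B."""
    s = (cat_b - cat_a) / (img_b - img_a)   # rotation + scale as one complex number
    return s, cat_a - s * img_a             # translation

def match_fraction(detections, catalog, s, t, tol):
    """Fraction of detections that land within tol of some catalog star."""
    hits = sum(1 for z in detections
               if min(abs(s * z + t - c) for c in catalog) <= tol)
    return hits / len(detections)

# Made-up tangent-plane catalog (arbitrary units) and a synthetic image of it.
catalog = [0 + 0j, 30 + 5j, 12 + 40j, 45 + 45j, 60 + 10j, 25 + 25j, 70 + 60j]
true_s, true_t = 0.5j, 10 + 20j
detections = [(c - true_t) / true_s for c in catalog[:6]]  # six real stars
detections += [5000 + 5000j, -4000 + 3000j]                 # two junk sources

# Hypothesis from a correct quad match (detections 0, 3 <-> catalog 0, 3) ...
s, t = similarity_from_pair(detections[0], detections[3], catalog[0], catalog[3])
print(match_fraction(detections, catalog, s, t, tol=1.0))  # 0.75
# ... and from a wrong one (detections 0, 3 <-> catalog 1, 4).
s, t = similarity_from_pair(detections[0], detections[3], catalog[1], catalog[4])
print(match_fraction(detections, catalog, s, t, tol=1.0))  # 0.25
```

The wrong hypothesis still "matches" the two stars it was built from; only the other detections expose it, which is why the verification step matters.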

The hypothesis test is framed as a Bayesian decision: the hypothesis is accepted only if its likelihood beats a null model of random catalog matches by an enormous margin (the astrometry.net paper sets the threshold at roughly 10⁹). This is what stops the algorithm from confidently mis-solving images of streetlights, defocused star fields, or sky-glow gradients that happen to produce plausible-looking quads.
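To get a feel for how strict a 10⁹ bar is, here is a toy version of the decision. It assumes a simple Bernoulli model with made-up probabilities (`p_true = 0.7` that a detected star lands near a catalog star under a correct hypothesis, `p_null = 0.05` by chance); the paper's actual likelihood is more detailed, so read this as an illustration of the odds arithmetic, not the solver's model.

```python
import math

ACCEPT = math.log(1e9)  # log of the ~10^9 odds threshold

def log_odds(n_matched, n_unmatched, p_true=0.7, p_null=0.05):
    """Log likelihood ratio, 'real alignment' vs 'chance', under a toy model.

    Assumption: each detected star independently lands near a catalog star
    with probability p_true if the hypothesis is right, or p_null by chance.
    """
    return (n_matched * math.log(p_true / p_null)
            + n_unmatched * math.log((1 - p_true) / (1 - p_null)))

def accept(n_matched, n_unmatched, **probs):
    return log_odds(n_matched, n_unmatched, **probs) > ACCEPT

print(accept(6, 2))   # False: log-odds about 13.5, short of ln(1e9), about 20.7
print(accept(12, 3))  # True: log-odds about 28.2
print(accept(2, 6))   # False
```

Six matches out of eight looks convincing but is rejected: with few stars the evidence cannot reach the threshold, the same mechanism behind the "too few stars" failure mode.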

What this means in practice

Plate solving is the reason smart telescopes work at all. The Seestar S50 is, mechanically, not a precision instrument. The mount has small backlash, no encoders, and only alt-az tracking. But every 30–60 seconds during an imaging session, the firmware re-solves the most recent frame, computes the offset between where the target should be and where it actually is, and nudges the mount. Drift never accumulates. Field rotation gets folded into the stacking step.
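The core of "re-solve and nudge" is comparing two sky positions. A sketch, assuming the correction is computed from the solved frame centre and the target in RA/Dec (I haven't seen the firmware, and converting the offset into alt-az motor steps is left out). M81's coordinates are approximate and the "solved" centre is hypothetical.

```python
import math

def separation_deg(ra1, dec1, ra2, dec2):
    """Great-circle separation between two RA/Dec points in degrees (haversine)."""
    ra1, dec1, ra2, dec2 = map(math.radians, (ra1, dec1, ra2, dec2))
    h = (math.sin((dec2 - dec1) / 2) ** 2
         + math.cos(dec1) * math.cos(dec2) * math.sin((ra2 - ra1) / 2) ** 2)
    return math.degrees(2 * math.asin(math.sqrt(h)))

def pointing_error_arcsec(target, solved):
    """Small-angle (east, north) offset in arcsec from the solved centre to the target."""
    (ra_t, dec_t), (ra_s, dec_s) = target, solved
    d_ra = (ra_t - ra_s + 180.0) % 360.0 - 180.0  # wrap across RA = 0/360
    east = d_ra * math.cos(math.radians(dec_s)) * 3600.0
    north = (dec_t - dec_s) * 3600.0
    return east, north

target = (148.888, 69.065)   # M81, approximate RA/Dec in degrees
solved = (148.950, 69.050)   # hypothetical solved centre of the latest frame
east, north = pointing_error_arcsec(target, solved)
sep = separation_deg(*target, *solved) * 3600.0
print(f"target is {east:+.1f} arcsec east, {north:+.1f} arcsec north; total {sep:.1f} arcsec")
```

The cos(Dec) factor matters at M81's declination: a given step in RA covers barely a third as much sky there as it would on the celestial equator.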

It’s also why polar alignment is essentially gone from the smart-telescope workflow. The mount doesn’t care whether it’s aligned with the celestial pole, because it’s reading its actual orientation from the sky every minute. The same logic applies to the Vespera Pro and the DWARF 3: different optical designs, same algorithmic core.

For amateur astrophotographers, plate solving unlocks something more interesting than a polished UX: you can solve your own images, after the fact, with the public astrometry.net tools, and get a real WCS (World Coordinate System) header back.

Solving your own frames

The simplest path is the free web service at nova.astrometry.net. Drag in a JPEG, FITS, or TIFF of a star field and within a minute you get back:

- The pointing (RA/Dec of the image centre)

- The plate scale (arcseconds per pixel)

- The orientation (angle of north, and a parity check for mirror flips)

- A FITS-WCS header you can paste into any astronomy tool
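What that header encodes is a pixel-to-sky mapping. A sketch of evaluating the basic gnomonic (TAN) case from the standard `CRVAL`, `CRPIX`, and `CD` keywords, ignoring SIP distortion terms; the header values below are invented (a 1080 × 1920 frame centred on M81 at about 2.39″/px, north up, east left). For real work, a library such as `astropy.wcs` handles distortion and edge cases.

```python
import math

def pixel_to_radec(px, py, crval, crpix, cd):
    """Gnomonic (TAN) pixel -> (RA, Dec) in degrees, with no SIP distortion terms.

    px, py are 1-based FITS pixel coordinates; cd is ((CD1_1, CD1_2), (CD2_1, CD2_2)).
    """
    dx, dy = px - crpix[0], py - crpix[1]
    # Intermediate world coordinates (degrees -> radians) on the tangent plane.
    xi = math.radians(cd[0][0] * dx + cd[0][1] * dy)
    eta = math.radians(cd[1][0] * dx + cd[1][1] * dy)
    ra0, dec0 = map(math.radians, crval)
    # Inverse gnomonic projection about the tangent point (ra0, dec0).
    denom = math.cos(dec0) - eta * math.sin(dec0)
    ra = ra0 + math.atan2(xi, denom)
    dec = math.atan2(math.sin(dec0) + eta * math.cos(dec0), math.hypot(xi, denom))
    return math.degrees(ra) % 360.0, math.degrees(dec)

# Hypothetical header values.
crval = (148.888, 69.065)            # CRVAL1, CRVAL2 (deg)
crpix = (540.5, 960.5)               # CRPIX1, CRPIX2 (1-based pixels)
scale = 2.39 / 3600.0                # deg per pixel
cd = ((-scale, 0.0), (0.0, scale))   # CD matrix: east left, north up
print(pixel_to_radec(540.5, 960.5, crval, crpix, cd))    # reference pixel -> CRVAL
print(pixel_to_radec(540.5, 1060.5, crval, crpix, cd))   # 100 px up: Dec grows ~0.066 deg
```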

I do this for every Seestar light frame I care about. The Seestar app gives you a pointing estimate, but the public solver gives you a real WCS that aligns cleanly when stacking with Siril or annotating with Aladin Lite. It also lets you cross-match faint dots in your stack against SIMBAD. A few times now I’ve found an unexpected asteroid trail or a faint galaxy in a frame I shot for something else.

For a local install (offline, fast, useful when batch-processing hundreds of subs), the astrometry.net source on GitHub builds on macOS and Linux. Index files are big (tens of GB for full sky coverage), but you only need the scale range your camera actually shoots. For a Seestar, with a 1.27° × 0.71° field at 250 mm focal length, the 4205–4207 series covers it. A DSLR with a 50 mm lens sees a field more than ten times wider, so it needs index files several scale bins higher. The installation guide is undramatic; the only real footgun is forgetting that the index files want to live somewhere with read permission for your processing user.
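Picking the scale range starts from the plate scale, 206,265 × pixel size ÷ focal length (same length units). A sketch of that arithmetic; the sensor figures (1920 × 1080 pixels of 2.9 µm, the IMX462 commonly listed for the Seestar S50) are my assumption, not something from the official docs.

```python
ARCSEC_PER_RADIAN = 206_264.806

def plate_scale_arcsec(pixel_um, focal_mm):
    """Plate scale in arcsec/pixel: 206265 * pixel size / focal length."""
    return ARCSEC_PER_RADIAN * (pixel_um * 1e-3) / focal_mm

def field_deg(pixels, pixel_um, focal_mm):
    """Field of view along one sensor axis, in degrees."""
    return pixels * plate_scale_arcsec(pixel_um, focal_mm) / 3600.0

# Assumed Seestar S50 sensor: 1920 x 1080 pixels of 2.9 um; 250 mm focal length.
scale = plate_scale_arcsec(2.9, 250)
print(f"{scale:.2f} arcsec/px, "
      f"{field_deg(1920, 2.9, 250):.2f} x {field_deg(1080, 2.9, 250):.2f} deg")
```

The result lands within about a hundredth of a degree of the 1.27° × 0.71° field quoted above; the small gap comes from rounding and from the assumed pixel pitch.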

Where it breaks

Plate solving has failure modes, and they are worth knowing, because “the solve failed” only describes the symptom. The cause is usually one of these:

- Too few stars. In sparse star fields (narrow-field imaging near the galactic poles, very short exposures, or heavily light-polluted skies), there may not be enough detected sources to form distinctive quads. Solver returns empty. Fix: stretch and re-stack to push the detection threshold lower, or use a known approximate scale and pointing as a hint to the solver.

- Optical distortion. Wide-field cameras (fisheye, ultra-wide) produce non-affine distortion that breaks naive matching. Astrometry.net handles this by fitting polynomial corrections after an initial match, but the initial match still needs enough roughly undistorted asterisms in the centre of the frame to bootstrap.

- Low altitude. Below about 20° elevation, atmospheric refraction shifts star positions by tens of arcseconds in a way that’s hard to model without a full atmospheric profile. The solver still works for centring, but the residuals are worse and the WCS isn’t great for absolute astrometry of moving objects.

- Bayesian over-conservatism. The acceptance threshold is tuned so that false positives are vanishingly rare, which means it occasionally refuses to solve a perfectly valid image when the star field is genuinely ambiguous (e.g. a regular grid of similar-magnitude stars in an open cluster). The fix is “try again with more exposure” or “constrain the search with a manual hint.”
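With a local install, "a hint" means passing scale and position constraints to astrometry.net's `solve-field`. A sketch that builds the argument list in Python; the flags (`--scale-units arcsecperpix`, `--scale-low`, `--scale-high`, `--ra`, `--dec`, `--radius`, `--overwrite`, `--no-plots`) are real `solve-field` options, while the helper name, the ±10% slack, the file name, and the M81 coordinates are illustrative assumptions.

```python
import shlex

def solve_field_cmd(image, scale_arcsec=None, ra=None, dec=None,
                    radius_deg=None, slack=0.1):
    """Build a solve-field command with optional scale and position hints."""
    cmd = ["solve-field", "--overwrite", "--no-plots"]
    if scale_arcsec is not None:
        # Narrow the plate-scale search to +/- slack around the expected value.
        cmd += ["--scale-units", "arcsecperpix",
                "--scale-low", f"{scale_arcsec * (1 - slack):.3f}",
                "--scale-high", f"{scale_arcsec * (1 + slack):.3f}"]
    if ra is not None and dec is not None and radius_deg is not None:
        # Only search within radius_deg of the approximate pointing (degrees).
        cmd += ["--ra", f"{ra:.4f}", "--dec", f"{dec:.4f}",
                "--radius", f"{radius_deg:g}"]
    cmd.append(image)
    return cmd

cmd = solve_field_cmd("m81_stack.fits", scale_arcsec=2.39,
                      ra=148.888, dec=69.065, radius_deg=2)
print(shlex.join(cmd))
```

To actually run it, pass the list to `subprocess.run(cmd, check=True)` on a machine with astrometry.net and suitable index files installed.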

ML-adjacent, not ML

Astrometry.net predates the deep-learning era. It’s geometric, deterministic, no neural network in sight. But it’s an interesting algorithmic ancestor for what’s coming next.

The Vera Rubin Observatory, now in early commissioning with science operations starting later in 2026, will need plate solving on roughly a 30-second cadence for ten years across a 9.6 deg² field. Its Data Management pipeline is descended from the same playbook: pre-indexed reference catalogs, geometric matching, statistical acceptance, though obviously specialised for the survey’s known optics rather than fully blind solving.

There’s an obvious extension nobody yet ships in production: replace the hand-designed quad hash with a learned embedding. A small CNN could plausibly produce a richer descriptor that tolerates optical distortion, missing stars, and hot pixels in one shot. A few academic groups have tried it, and the results are competitive but not dominant. The geometric approach is so cheap and reliable that there’s not much pull. It’s the kind of problem where a deep-learning hammer would buy a sub-percent improvement at the cost of explainability, and astronomers have long memories of mis-calibrated solvers. The field rewards approaches you can write down on a napkin.

That’s the thing about plate solving. Every time I tap a target and watch the Seestar slew, three seconds of geometric hashing, running on a chip in a $500 telescope, turn the entire celestial sphere into a database lookup. The math is older than my career. It still works.